The rise of generative artificial intelligence is beginning to reach one of the most consequential gateways in science: research funding. A new study from the Northwestern Innovation Institute finds that proposals showing stronger signs of AI-assisted writing were more likely to receive support from the National Institutes of Health, while also tending to align more closely with ideas that had already been funded.

The findings suggest that AI may offer researchers a practical advantage in preparing competitive proposals, while also raising broader questions about whether these tools could gradually steer funding systems toward more conventional lines of inquiry.

The study speaks to two open questions in the field. First, when AI helps researchers become more productive, is that because it makes it easier to articulate and package ideas, or because it actually speeds up the research itself? Second, has AI's influence already reached further back than most people realize, shaping not just the papers scientists publish but the funding proposals they submit in the first place?

Federal agencies such as the NIH and the National Science Foundation help determine which scientific ideas receive public support, which researchers are able to pursue ambitious work and which fields gain momentum. Because those decisions shape the future direction of discovery, even subtle shifts in how proposals are written, evaluated and selected can have lasting effects across the research ecosystem.

Yet while large language models such as ChatGPT have rapidly entered classrooms, offices and laboratories, far less attention has been paid to how they may be influencing the grant process itself. Proposal writing is often one of the most time-consuming parts of academic life, and AI tools can reduce that burden by helping draft language, summarize prior work and improve organization.

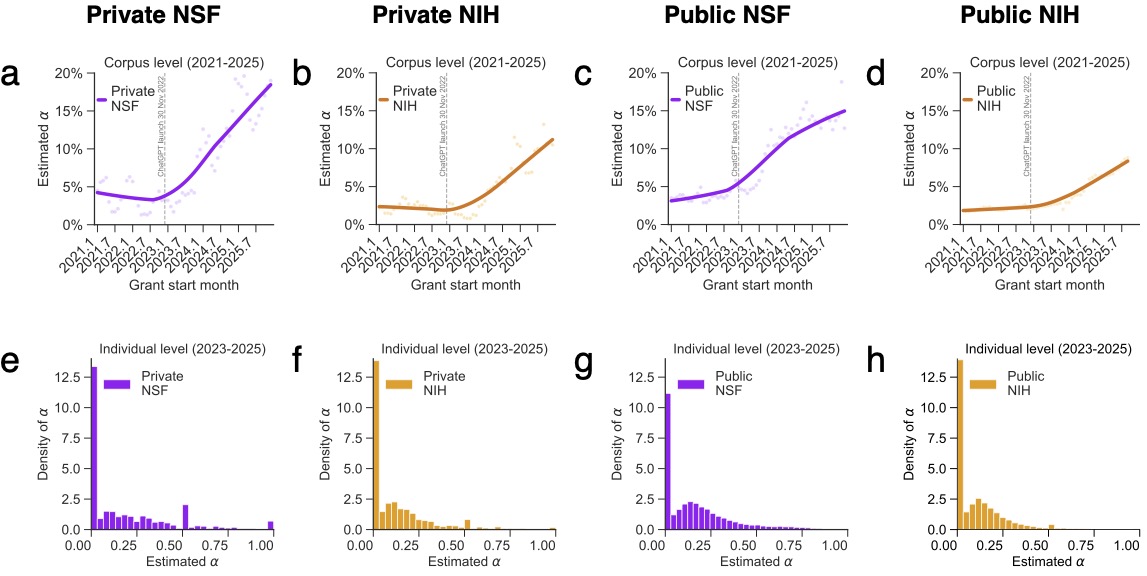

To examine how those tools may already be affecting funding outcomes, researchers at the Northwestern Innovation Institute analyzed confidential proposal submissions from two major U.S. research universities, together with the full population of publicly released NIH and NSF awards from 2021 through 2025. The combined dataset, made possible in part through Bridge, a collaborative initiative at the Innovation Institute that integrates research, funding and innovation data across partner institutions, offered a rare window into both funded and unfunded proposals at the earliest stage of the research pipeline.

Signs of AI-assisted writing rose sharply beginning in 2023, shortly after generative AI tools became widely available. At NIH, proposals with higher levels of AI involvement were more likely to receive funding and went on to produce more publications. But that productivity gain came with an important qualifier: the additional output was concentrated in ordinary papers rather than the most highly cited work. AI-assisted grants produced more research, but not necessarily more breakthroughs.

Across both agencies, proposals with stronger AI signals also tended to be less distinctive from recently funded work. Crucially, the study found that this reflects genuine shifts in what researchers are proposing, not merely in how they are writing: when the researchers held scientific content constant and applied AI rewriting to existing abstracts, the semantic position of those proposals barely changed. The convergence is happening at the level of ideas.

These findings directly address both open questions. The productivity gains, which appeared only at NIH and took the form of more publications but not more breakthroughs, suggest that AI is primarily lowering the cost of communication rather than accelerating scientific execution. And by observing confidential, unfunded proposals alongside funded awards, the study shows that AI's influence is already operating upstream, reshaping how ideas are articulated and positioned before they ever reach publication.

No comparable funding or output advantages were observed at NSF. The contrast suggests that AI's effects depend heavily on institutional context and review norms. One possibility is that NIH's emphasis on incremental, executable projects makes AI-assisted drafting particularly effective at conforming to established templates, an advantage that does not translate as cleanly to NSF's broader portfolio of outputs.

The study points to a deeper policy tension. If AI tools help applicants optimize proposals around language and structures that have succeeded before, they could quietly reinforce existing funding patterns, improving efficiency but potentially making it harder for unconventional or high-risk ideas to stand out, even as agencies emphasize the value of high-risk, high-reward research.

For universities and funding agencies, the question is not simply whether AI improves writing quality. It is whether widespread use of these systems could subtly reshape how scientific ambition is presented and ultimately rewarded.

As generative AI becomes more embedded in academic work, its influence may extend far beyond drafting assistance. It may increasingly shape which ideas receive resources, which projects move forward and how the next generation of scientific breakthroughs begins.

Read the paper: The Rise of Large Language Models and the Direction and Impact of US Federal Research Funding